Submitted by CEB21904 on

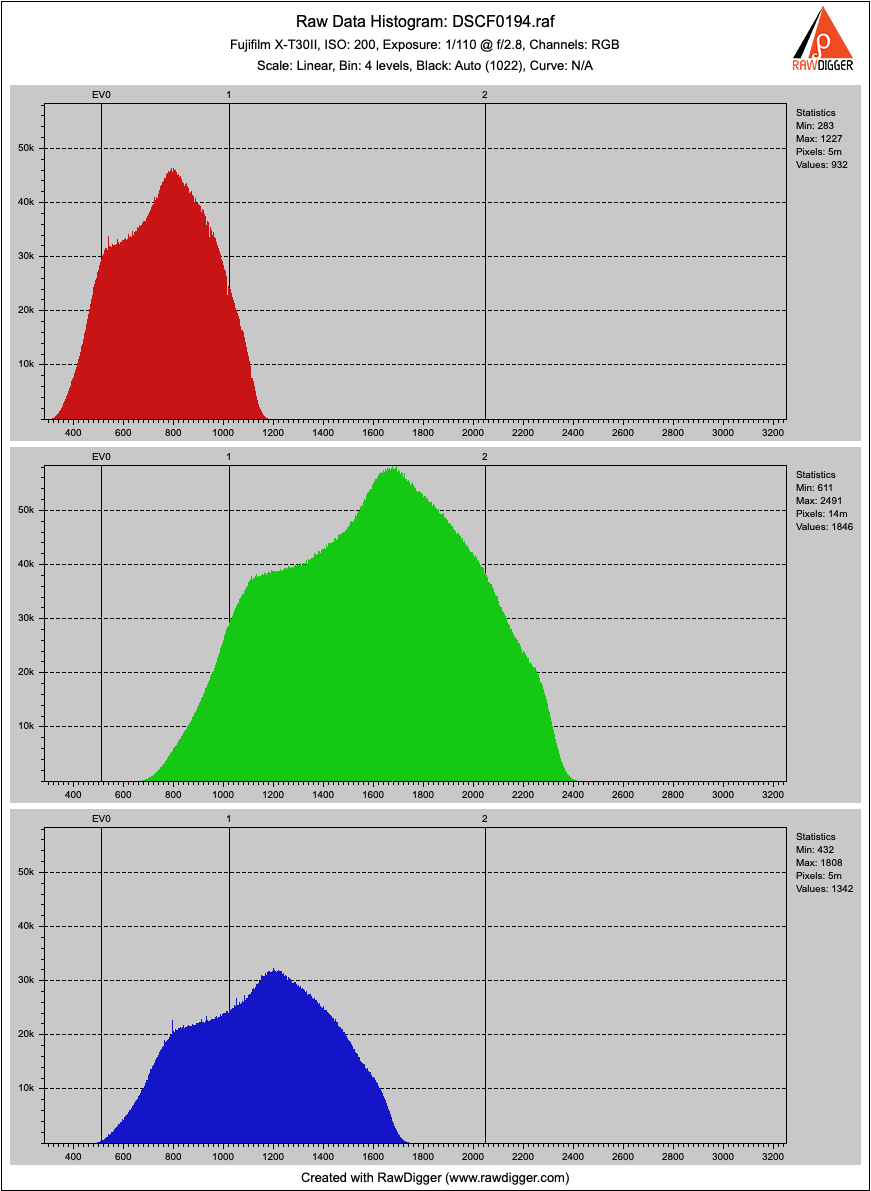

I'm learning to use my Fujifilm X-T30 II, which has an X-Trans sensor. Its color filter array has red, blue, and green photosites of the same size, plus green photosites with twice the side length and four times the area. As I understand it, a pixel includes 2 red photosites, 2 blue ones, 1 green one of that same area, and 1 green one of four times that area, so green has 2.5 times the area of red (or blue) overall. For a gray photo, why don't the green pixels have much higher values in RawDigger as a result? Is RawDigger rescaling them accordingly, or is the camera?

My sample in the middle of the featureless gray image reports 5 million red pixels, 5 million blue ones, and 14 million green ones. Are pixels the same as photosites, with each photosite being either R, G, or B? Or are pixels small groups of photosites, so that each pixel's color is defined by the mix of photosite signals within it? If pixels are individual photosites, why isn't the green histogram bimodal?

Thank you!

Submitted by LibRaw on

Dear Sir:

Pixels here are normal (non-binned) photodiodes. 'Photosite' is a poorly defined term, so we avoid it.

The Fujifilm X-Trans CFA pattern is a repeating 6 × 6 template containing 20 green pixels, 8 blue pixels, and 8 red pixels. RawDigger displays statistics for those pixels.
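To make the counts concrete, here is a minimal Python sketch. It assumes one commonly drawn 6 × 6 X-Trans layout. A given raw file may start the tile at a different offset, but every tile still holds 20 green, 8 red, and 8 blue pixels. The names `XTRANS_6X6`, `tile_counts`, `image_counts`, and `green_blocks` are hypothetical and exist only for this illustration. The sketch also shows that, in this layout, 16 of the 20 green pixels sit in 2 × 2 groups of ordinary, equal-size green pixels. Such a group can easily look like one large green element in CFA diagrams.

```python
from collections import Counter

# Hypothetical illustration: one commonly drawn 6 x 6 X-Trans layout.
# A real raw file may start this tile at a different offset, but every
# 6 x 6 tile still holds the same number of R, G, and B pixels.
XTRANS_6X6 = [
    "GGRGGB",
    "GGBGGR",
    "BRGRBG",
    "GGBGGR",
    "GGRGGB",
    "RBGBRG",
]


def tile_counts(pattern):
    """Count the pixels of each color in one CFA tile."""
    return Counter(color for row in pattern for color in row)


def image_counts(pattern, width, height):
    """Count R/G/B pixels in a width x height mosaic that repeats the tile.

    Instead of visiting every pixel, count how many image rows and columns
    map onto each tile row and column, so full-size frames are cheap.
    """
    n = len(pattern)
    rows = [height // n + (1 if r < height % n else 0) for r in range(n)]
    cols = [width // n + (1 if c < width % n else 0) for c in range(n)]
    counts = Counter()
    for r in range(n):
        for c in range(n):
            counts[pattern[r][c]] += rows[r] * cols[c]
    return counts


def green_blocks(pattern):
    """Return top-left (row, col) of 2 x 2 all-green groups inside one tile."""
    n = len(pattern)
    return [
        (r, c)
        for r in range(n - 1)
        for c in range(n - 1)
        if pattern[r][c] == pattern[r][c + 1]
        == pattern[r + 1][c] == pattern[r + 1][c + 1] == "G"
    ]


if __name__ == "__main__":
    print(dict(tile_counts(XTRANS_6X6)))  # {'G': 20, 'R': 8, 'B': 8}
    print(dict(image_counts(XTRANS_6X6, 600, 400)))  # {'G': 133400, 'R': 53300, 'B': 53300}
    print(green_blocks(XTRANS_6X6))  # [(0, 0), (0, 3), (3, 0), (3, 3)]
```

Because every pixel in the mosaic is the same size, the green count is simply 20/36 of all pixels and red and blue are 8/36 each. The green values are not inflated by any larger element.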
